OF ARTIFICIAL INTELLIGENCE

Stephanie Haack is director of communications for the Computer Museum in Boston.

The quest for artificial intelligence is as modern as the frontiers of computer science and as old as antiquity. The concept of a "thinking machine" began as early as 2500 B.C., when the Egyptians looked to talking statues for mystical advice. Sitting in the Cairo Museum is a bust of one of these gods, Re-Harmakis, whose neck reveals the secret of his genius: an opening at the nape just big enough to hold a priest.

Even Socrates sought the impartial arbitration of a "thinking machine." In 450 B.C. he told Euthyphro, who in the name of piety was about to turn his father in for murder, "I want to know what is characteristic of piety ... that I may have it to turn to, and to use as a standard whereby to judge your actions and those of other men."

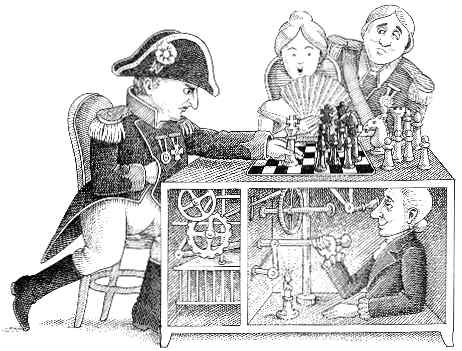

Automata, the predecessors of today's robots, date back to ancient Egyptian figurines with movable limbs like those found in Tutankhamen's tomb. Much later, in the fifteenth century A.D., drumming bears and dancing figures on clocks were the favorite automata, and game players such as Wolfgang von Kempelen's Maelzel Chess Automaton reigned in the eighteenth century. (Kempelen's automaton proved to be a fake; a legless master chess player was hidden inside.) It took the invention of the Analytical Engine by Charles Babbage in 1833 to make artificial intelligence a real possibility. Babbage's associate, Lady Lovelace, realized the profound potential of this analyzing machine and reassured the public that it could do nothing it was not programmed to do.

Artificial intelligence (AI), as both a term and a science, emerged some 120 years later, after the operational digital computer had made its debut. In 1956 Allen Newell, J. C. Shaw and Herbert Simon introduced the first AI program, the Logic Theorist, to prove basic theorems of logic as defined in Principia Mathematica by Bertrand Russell and Alfred North Whitehead. For one of them, Theorem 2.85, the Logic Theorist surpassed its inventors' expectations by finding a new and better proof.

Suddenly we had a true "thinking machine": one that knew more than its programmers.

The Dartmouth Conference

An eclectic array of academic and corporate scientists viewed the demonstration of the Logic Theorist at what became the Dartmouth Summer Research Project on Artificial Intelligence. The attendance list read like a present-day Who's Who in the field: John McCarthy, creator of the popular AI programming language LISP and director of Stanford University's Artificial Intelligence Laboratory; Marvin Minsky, leading AI researcher and Donner Professor of Science at M.I.T.; and Claude Shannon, pioneer of information and AI theory, who was with Bell Laboratories.

By the end of the two-month conference, artificial intelligence had found its niche. Thinking machines and automata were looked upon as antiquated technologies. Researchers' expectations were grandiose, their predictions fantastic. "Within ten years a digital computer will be the world's chess champion," Allen Newell said in 1957, "unless the rules bar it from competition."

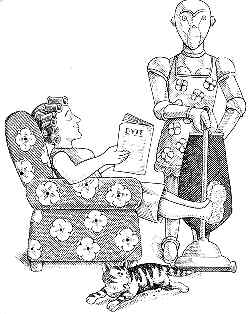

Isaac Asimov, writer, scholar and author of the Laws of Robotics, was among the wishful thinkers. Predicting that AI (for which he still used the term "cybernetics") would spark an intellectual revolution, in his foreword to Thinking by Machine by Pierre de Latil he wrote:

Many people imagined that by the year 1984 computers would dominate our lives. Prof. M. W. Thring envisioned a world with household robots, and B. F. Skinner forecast that teaching machines would be commonplace. Arthur L. Samuel, a Dartmouth conference attendee from IBM, suggested that computers would be capable of learning, conversing and translating language; he also predicted that computers would house our libraries and compose most of our music.

Getting Smarter

Artificial intelligence research has progressed considerably since the Dartmouth conference, but the ultimate AI system has yet to be invented. The ideal AI computer would be able to simulate every aspect of learning so that its responses would be indistinguishable from those of humans.

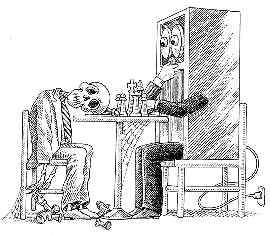

Alan M. Turing, who as early as 1934 had theorized that machines could imitate thought, proposed a test for AI machines in his 1950 essay "Computing Machinery and Intelligence." The Turing Test calls for a panel of judges to review typed answers to any question that has been addressed to both a computer and a human. If the judges can make no distinctions between the two answers, the machine may be considered intelligent.

It is 1984 as this is being written. A computer has yet to pass the Turing Test, and only a few of the grandiose predictions for artificial intelligence have been realized. Did Turing and other futurists expect too much of computers? Or do AI researchers just need more time to develop their sophisticated systems? John McCarthy and Marvin Minsky remain confident that it is just a matter of time before a solution evolves, although they disagree on what that solution might be. Even the most sophisticated programs still lack common sense, and McCarthy, Minsky and other AI researchers are studying how to program in that elusive quality.

McCarthy, who first suggested the term "artificial intelligence," says that after thirty years of research AI scholars still don't have a full picture of what knowledge and reasoning ability are involved in common sense. But according to McCarthy we don't have to know exactly how people reason in order to get machines to reason. He believes that a sophisticated programming language of mathematical logic will eventually be capable of common-sense reasoning, whether or not it mirrors human thought.

Minsky argues that computers can't imitate the workings of the human mind through mathematical logic. He has developed the alternative approach of frame systems, in which one would record much more information than needed to solve a particular problem and then define which details are optional for each particular situation. For example, a frame for a bird could include feathers, wings, egg laying, flying and singing. In a biological context, flying and singing would be optional; feathers, wings and egg laying would not.
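The bird example can be made concrete with a small sketch. This is only an illustration of the idea as described here, not Minsky's own formulation: the `Frame` class, its method names, the `"biological"` context label and the sample `penguin` and `bat` descriptions are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Frame:
    """A named set of slots, with some slots marked optional per context."""
    name: str
    slots: set[str]
    # Maps a context name to the slots that are optional in that context.
    optional_in: dict[str, set[str]] = field(default_factory=dict)

    def required(self, context: str) -> set[str]:
        """Slots that must be present in the given context."""
        return self.slots - self.optional_in.get(context, set())

    def matches(self, observed: set[str], context: str) -> bool:
        """True if every required slot for the context was observed."""
        return self.required(context) <= observed


# The bird frame from the text: five slots, two optional in a biological context.
bird = Frame(
    name="bird",
    slots={"feathers", "wings", "egg laying", "flying", "singing"},
    optional_in={"biological": {"flying", "singing"}},
)

# Hypothetical observations, invented for illustration.
penguin = {"feathers", "wings", "egg laying"}
bat = {"wings", "flying"}

print(bird.matches(penguin, "biological"))  # True: only optional slots are missing
print(bird.matches(bat, "biological"))      # False: feathers and egg laying are required
```

The frame records more than any single question needs; the context decides which slots may be left unfilled.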

The common-sense question remains academic. No current program based on mathematics or frame systems has common sense. What do machines think? To date, they think mostly what we ask them to.

CHECKMATE CHALLENGE

Designing a chess program is an awesome task. In a 1950 Scientific American article, Claude Shannon argued that only an artificial intelligence program could play computer chess. A computer that explored every possible move and countermove, he said, would have to store a total equal to 10 to the 120th power moves, and "a machine calculating one variation each millionth of a second would require over 10 to the 95th power years to decide on its first move."

Shannon, the inventor of information theory, was one of the first to suggest that modern computers are capable of thinking, that is, of performing nonnumerical tasks. It is this capability that lies at the base of all computerized chess games and of AI itself.

Many of the first AI programs were programs that played bumbling but legal chess. In 1957 Alex Bernstein designed a program for the IBM 704 that played two amateur games. An alumnus of the Dartmouth Summer Research Project on Artificial Intelligence, Bernstein had been working on the chess system at the time of the conference. Then in 1958 Allen Newell, J. C. Shaw and Herbert Simon introduced a more sophisticated chess program. It still had some bugs, however, and was beaten in thirty-five moves by a ten-year-old beginner in its last official game, played in 1960.

Arthur L. Samuel of IBM, another Dartmouth conference alumnus, spent much of the fifties working on game-playing AI programs. His passion, checkers, proved a conquerable game, and by 1961 he had a program that could play at the master's level. Samuel believes that an equally good chess program would exist if anyone worked as long as he did on the design. Like most successful AI programs, Samuel's checkers player could learn. When it encountered a position for the second time, the program evaluated the results of its previous reaction in that situation before deciding on the next move.

Surely the combined efforts spent on AI chess players equal or surpass Samuel's dedication to checkers, yet no program can consistently beat a world chess champion. Even backgammon, a less popular pursuit with AI programmers, has fared better than chess: the program BKG 9.8 actually won the 1979 world backgammon championship.

This raises the question: why hasn't a world-class AI chess player been created? Claude Shannon may have answered that question twenty-five years ago when he suggested that AI programming would "require a different type of computer than any we have today. It is my feeling that this will be a computer whose natural operation is in terms of patterns, concepts, and vague similarities, rather than sequential operations on ten-digit numbers."

If Shannon was wrong, M.I.T. professor Edward Fredkin stands to lose a bundle. Fredkin has offered $100,000 to the first computer to beat the world's grand master in chess.

S. H.
