TODAY AND TOMORROW

by Marcian E. Hoff, Jr.

Marcian E. Hoff, Jr., is a vice-president for research at Atari, Inc. While at Intel, he developed the first microprocessor: the Intel 4004.

Soon after the integrated circuit was developed, just two decades ago, it became apparent that very complex circuits would be possible. In some cases a major portion of a computer, called a module, could be constructed as a single circuit. In fact, this made it possible to build a large system by breaking it up into a number of different kinds of modules, each of which could be a circuit.

Before long, families of modules were developed. These families were quite versatile, and using the right types of modules allowed for building a very wide variety of equipment.

But the integrated circuit business was changing. Every year it became possible to make more and more complicated circuits. So it became desirable to make more complicated modules. The problem was that an increase in the complexity of a module meant a decrease in its versatility, so that eventually a computer or other piece of equipment would have no more than one of each type of module. The economics of the integrated circuit business made this undesirable. High volume was the goal, but a different type of module for each type of system being built meant that no more than a few hundred or a few thousand of any one type of module would be made.

The microprocessor represented a solution to this problem. It was a new type of module that was very versatile. With some very simple programming techniques, we could make this module appear to be many different kinds of modules. In other words, it could be customized by programming.

Microprocessors, or microcomputers, are just miniature computers. In general, they can be used for most of the applications for which larger computers are used. The earliest microprocessor was very poor in performance, and there were many things it could not do. Over the years, however, performance has been improved so that microprocessors now rival machines that in some cases cost a thousand times more.

Much of the progress in reducing cost and improving performance has been made in the last ten years, since the energy crunch hit and we were told about making things smaller. For a while, the automobile industry looked at making things smaller, but nobody took it to heart quite the way the semiconductor industry did.

Smaller is better. In the case of the semiconductor industry, smaller is really better. In a typical integrated circuit of today, there are several thousand components interconnected by metal lines. Some circuits have reached the point where they have almost half a million components on one chip.

The fabrication process is fairly straightforward. Start out with a piece of silicon. Apply some photoresist. Expose the photoresist selectively. Develop it, so that it's removed in some places and left in other places. Then, where the silicon is exposed, subject it to various treatments: dip it in acids, bombard it with impurities and change its characteristics. There are a variety of process steps, but they are all based on this photographic definition of components.

How big should the circuit be? In general, the cost of processing a wafer of silicon is somewhat a function of the size of each component, or feature, but the smaller the circuit the less it costs. The actual size will be determined by the average feature size. How big must each detailed piece of circuitry be? A variety of steps in making a circuit determine this feature size.

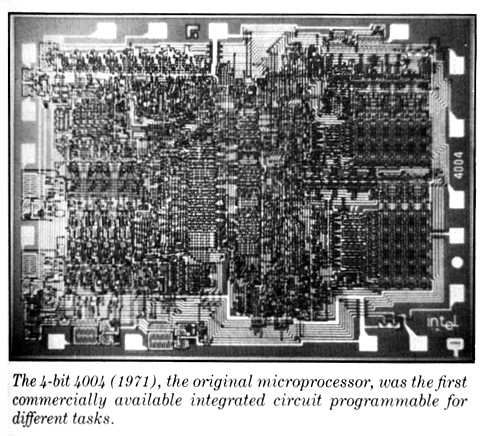

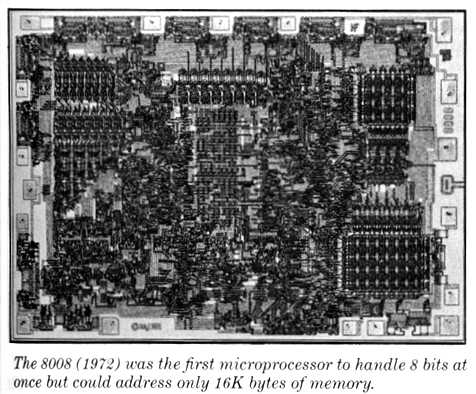

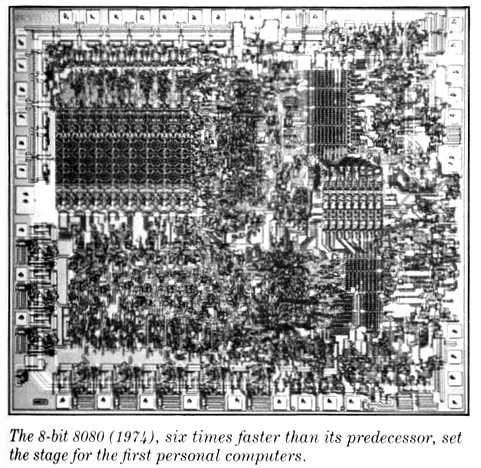

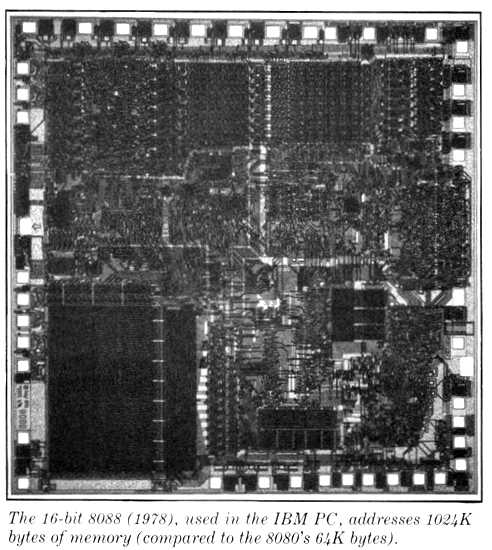

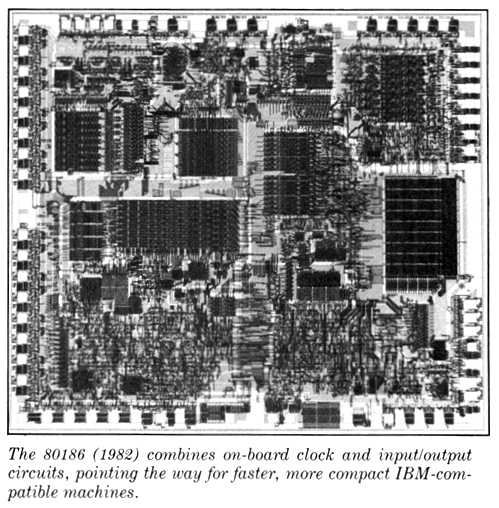

CHIP GENERATIONS

The history of personal computers can be traced through the progress of the microprocessors available as their brains. Each microchip manufacturer has brought out improved progeny within families of processors, retaining similar instruction sets for upward mobility. Though each successive design may not be a quantum leap forward, there is a steady movement toward faster and more powerful handling of more bits of information at a time. Here are members (with introduction dates) of one of the most celebrated microchip families from Intel.

Say we want to cut the feature size in half. The linear dimensions of the circuit would be cut in half, the area would be cut to a quarter, and presumably we'd get four times as many circuits for the same amount of money. In this manner, we improve the number of circuits per given amount of silicon. But another step takes place. The circuit runs faster (in fact, it runs twice as fast), and it can do more calculations per second. So now each circuit costs about a quarter as much while it does twice as much work, and we have about an eight-to-one improvement.
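The arithmetic behind that eight-to-one figure can be sketched in a few lines of Python. This is only an illustration of the reasoning above, not a model of any real process: the function name `scaling_gain` is hypothetical, and the assumptions that circuits per wafer grow with the square of the shrink and that speed grows in direct proportion to it are taken from the halving example in the text.

```python
def scaling_gain(shrink):
    """Return (circuits_per_area, speed, combined) gains for a linear shrink.

    Assumptions, following the halving example in the text:
    - area per circuit falls with the square of the linear shrink,
      so circuits per wafer (and per dollar) rise by shrink ** 2
    - circuit speed rises in direct proportion to the shrink
    """
    circuits_per_area = shrink ** 2
    speed = shrink
    return circuits_per_area, speed, circuits_per_area * speed


density, speed, combined = scaling_gain(2)
print(f"circuits per unit of silicon: {density}x")
print(f"speed: {speed}x")
print(f"combined cost/performance gain: {combined}x")
```

Under these assumptions the combined gain grows as the cube of the shrink factor, which is why modest reductions in feature size pay off so heavily.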

Over a ten-year period, typical feature size has gone from about ten microns to about two microns, a reduction by a factor of five. In the most current processes, the typical feature (two microns) is about one ten-thousandth of an inch. On that order of resolution, you could publish a whole Ph.D. thesis in less than a square inch.

We are in a worldwide race for smallness. Essentially everybody interested in integrated circuits is trying to make things smaller. One of the key questions is how far it can go. There are limits imposed by the sheer difficulty of making a circuit. Trying to make it smaller poses further problems, but most of these can be fixed. Some are imposed by lithography. We are getting down to the point where ordinary visible light, the kind we use to look around the room, cannot resolve the features. But there are ways around that. We may go to electrons. Electron beams or x-rays give higher resolutions. Also, some processing steps have to be resolved, but in general these can be made to work.

Are there any fundamental limits that we have to worry about? Obviously there are some. Atoms have finite size. We are defining features and items that are made of atoms. We cannot get down to subatomic dimensions, which would put the limit maybe a factor of ten thousand away from where we are today. That's a long way, especially if we reduce feature size only by a factor of five every ten years. We don't have to worry about it in our lifetime.
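To see why a factor of ten thousand is "a long way," here is a rough estimate of how long it would take at the historic rate. It is a back-of-the-envelope sketch that assumes the shrink continues at a steady factor of five per decade; the function name `decades_to_shrink` is hypothetical.

```python
import math


def decades_to_shrink(total_factor, factor_per_decade=5.0):
    """Decades needed to shrink by total_factor at a steady rate.

    Solves factor_per_decade ** n == total_factor for n.
    """
    return math.log(total_factor) / math.log(factor_per_decade)


decades = decades_to_shrink(10_000)
print(f"about {decades:.1f} decades, or roughly {decades * 10:.0f} years")
```

At a steady factor of five per decade, the atomic limit would be nearly six decades away.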

But there is another, much closer limit. The smaller the circuit, the noisier it becomes. We have to reduce the operating voltages, or signals, that represent the information traveling through the circuit. It's like the graininess you get when you blow up a negative in film. As we reduce the size of the circuit, it becomes difficult to distinguish the signals we're looking for from the inherent noise of the circuit behavior.

How far away are we? At the present time, our signals are on the order of a thousand times larger than this kind of noise. But if we reduce the linear dimensions of the circuit by, say, a factor of twenty, the signals will come down to about ten times the size of the noise. At that point, the circuits start to become unreliable. The computer starts to make mistakes. In fact, it starts to make mistakes very, very rapidly as we try to go beyond that dimension. The circuitry is such that almost none of the known techniques for making circuits more reliable will work if we try to go below these dimensions.

Today the failure rates due to circuit noise are such that if all the computers in the world ran from now until the end of the universe, there would be perhaps one mistake. When we reduce today's dimensions by a factor of twenty, we perhaps get to the borderline of usefulness. But this still allows about a factor of ten thousand to one in reduction of cost vs. performance. We have quite a way to go. Considering the amount of effort going on in this area, I think we'll see a push toward that limit. There are some ways we can extend the limit by reducing temperature so that internal friction is reduced, but this tends to reduce convenience. (We'd have to carry a refrigerator around with every circuit.)
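As a rough check on the "ten thousand to one" figure, the short sketch below applies the same reasoning used for the halving example (four times the circuits, twice the speed) to a factor-of-twenty shrink. The names are hypothetical, and both the cube-law gain and the steady factor of five per decade are assumptions carried over from earlier in the article, not measured data.

```python
import math

SHRINK_AT_NOISE_LIMIT = 20   # linear shrink the text places at the noise limit
FACTOR_PER_DECADE = 5        # historic shrink rate quoted earlier in the article


def cost_performance_gain(shrink):
    """Gain under the article's assumptions: density rises with the
    square of the shrink and speed rises in proportion to it."""
    return shrink ** 2 * shrink


gain = cost_performance_gain(SHRINK_AT_NOISE_LIMIT)
decades = math.log(SHRINK_AT_NOISE_LIMIT) / math.log(FACTOR_PER_DECADE)

print(f"gain at a {SHRINK_AT_NOISE_LIMIT}x shrink: {gain:,} to one")
print(f"time to get there at the historic rate: about {decades:.1f} decades")
```

The computed gain of 8,000 is the same order of magnitude as the ten thousand quoted above, and at the historic rate the noise limit would be reached in a little under two decades.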

I think we can say that a lot more computing power will be available in the near future. What the next generation must decide is how this power will be used. Despite many upheavals, the refinements in the industrial revolution have generally reduced disadvantages and increased advantages. As we develop computers, we can make them a bane or a blessing.
