HISTORY'S GREAT COMPUTER ECCENTRICS

by Marguerite Zientara

Marguerite Zientara is East Coast senior writer for InfoWorld.

Geniuses are often strange, and the geniuses of the computing field are no exception. Though one or another of these characters might seem downright peculiar to us today, it was their ability to see the unseen and ignore others' opinions that opened the way to the computer age.

BLAISE PASCAL (1623-1662)

French philosopher Blaise Pascal, inventor of the first workable automatic

calculator, was strange right from day one. In his first year, according

to the available literature, he was inclined to give way to hysterics at

the sight of water. He was also known to throw tantrums upon seeing his mother

and father together. Arriving at the logical conclusion for their day, the

adults in charge tried to exorcise him from a sorcerer's spell.

In time, Blaise managed to calm himself down and settle

into a respectable infancy. At the age of four he suffered the untimely

death of his mother, and it was then that Etienne Pascal took over his son's

education. This was both good and bad. Etienne's rather uncompromising ideas

encouraged the mental discipline Blaise would need for his later accomplishments,

but they almost smothered his mathematical pursuits.

From the start, Blaise was curious about geometry. His

father, a talented mathematician in his own right, wanted him to study Greek

and Latin first. Locking up all his math books, he warned his friends never

to mention mathematics in front of his son. In the end Blaise managed to

beg from Etienne the most elementary definition of geometry, whereupon he

taught himself its basic axioms and succeeded, with no guidance whatever,

in proving the Thirty-Second Proposition of Euclid. This was enough to convince

his father, who set about teaching him everything he knew about geometry.

When Blaise was sixteen, Etienne accepted a government

post that called for monumental calculations in figuring tax assessments.

The man who had tried to hold back his son now turned to him for help in

the thankless labor of hand-totaling endless columns of numbers. Blaise soon

formulated the concept for a new calculating machine, then spent eleven years

trying to perfect it. Just before he turned thirty, and after building more

than fifty unsuccessful models, he introduced a working mechanical calculator.

The Pascaline caused a sensation on the Continent (this

despite the fact that it could only add and subtract), but Blaise was unable

to find buyers for his wondrous machine. People said it was too complicated

to operate, sometimes made mistakes and could be repaired only by its inventor.

They also feared it would take jobs away from bookkeepers and other clerks.

After all those years of work, the gadget was a commercial flop.

In 1654, after a profound religious experience, Pascal

renounced the world and all the people in it. Though his contributions to

math and science include the modern theory of probability, advances in differential

calculus and hydraulics, he went on to become one of the greatest mystical

writers in Christian literature. Still, some of his religious views (the

bet on God's existence, for example) grew out of his mathematical insights.

He lived to the age of thirty-nine, when he died of a brain hemorrhage.

GOTTFRIED WILHELM LEIBNIZ (1646-1716)

Sometimes the race goes to the runner-up, not to the man who finishes first.

Case in point: Gottfried Wilhelm Leibniz, who developed a calculator even

better than Pascal's (it could add, subtract, multiply and divide) and went

on to make a killing in the marketplace. Not that he was just in it for

the money: "For it is unworthy of excellent men to lose hours like slaves

in the labor of calculation," he wrote, "which could safely be relegated

to anyone else if machines were used."

While studying for a law degree, Leibniz became curious

about a secret society that claimed to seek the "philosopher's stone." Something

of a wag at twenty, and not above practical jokes, he collected the most

obscure phrases he could find in the alchemy books and composed a nonsensical

letter of application. The society was reportedly so impressed by Leibniz'

erudition that they not only accepted him for membership, but appointed him

their secretary as well.

Leibniz was clever, but he was also overwhelmingly versatile.

Regarded as one of the great universalists of all time, he made his mark

in such diverse areas as law, history, nautical science, optics, hydrostatics,

mechanics, mathematics and political diplomacy. His binary theory and initiation

of symbolic logic laid the foundation for today's computers.

How did the man do so much? First of all, he lived for

seventy years, almost twice as long as Pascal. Second, it is said he could

work anywhere, at any time and under any conditions. He read, wrote and

ruminated incessantly. By all accounts he slept little but well, even during

the nights spent more or less upright in his chair, where he'd remain for

days at a time until he finished the project at hand.

With all these working hours, one might suspect that

Leibniz was something of a hermit. Not so. He enjoyed socializing with all

kinds of people, believing that he could learn from even the most ignorant.

According to his biographers, he spoke well of everyone and made the most

of every situation. Which would explain his notorious theorem of optimism, "Everything

is for the best in this best of all possible worlds," later satirized by

Voltaire in the novel Candide.

In his last years, however, that celebrated optimism

undoubtedly faded as his fame declined and those close to him gradually slipped

away. When he died in 1716, during an attack of gout, only his secretary bothered

to attend the burial.

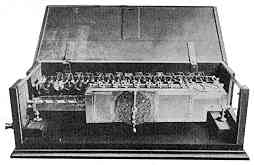

THE PASCALINE AND THE LEIBNIZ CALCULATOR

Blaise Pascal's pioneering calculator was essentially a two-dimensional machine, using cogged wheels rotated in proportion to numerical values that were added or subtracted. Multiplication or division had to be accomplished by tedious repetition of these operations. Leibniz' calculating machine was an improvement in three dimensions, using wheels at right angles that could be displaced by a special stepping mechanism to perform rapid multiplication or division.

Inside the Pascaline.

The inner workings of Leibniz' calculator.
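The difference between the two machines can be suggested in a few lines of Python. This is only a rough sketch, not a model of either mechanism: the function names are invented for illustration, the multiplier is assumed to be a non-negative whole number, and the "shift" variable merely stands in for the displaced carriage of the Leibniz design.

```python
def multiply_by_repeated_addition(a: int, b: int) -> tuple[int, int]:
    """Pascaline-style: add `a` to an accumulator `b` times.

    Returns the product and the number of additions performed.
    """
    total = 0
    additions = 0
    for _ in range(b):
        total += a
        additions += 1
    return total, additions


def multiply_by_shift_and_add(a: int, b: int) -> tuple[int, int]:
    """Leibniz-style: handle the multiplier one decimal digit at a time.

    For each digit of `b`, add `a` that many times, then shift one
    decimal place (the role played by the movable carriage).
    """
    total = 0
    additions = 0
    shift = 1  # 1, 10, 100, ... one step per decimal place
    while b > 0:
        digit = b % 10
        for _ in range(digit):
            total += a * shift
            additions += 1
        b //= 10
        shift *= 10
    return total, additions


print(multiply_by_repeated_addition(347, 205))  # (71135, 205)
print(multiply_by_shift_and_add(347, 205))      # (71135, 7)
```

Both routes reach the same product, but the digit-by-digit approach needs only as many additions as the sum of the multiplier's digits, which is why a stepping mechanism made multiplication so much faster.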

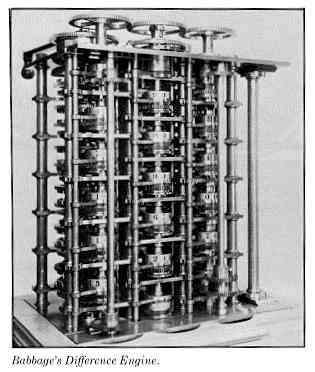

CHARLES BABBAGE (1791-1871)

Mired in the nineteenth century but devoted to an idea that would revolutionize

the world after his death, Charles Babbage was a man who knew his own worth

and his own genius.

Undaunted by the failure of early inventions (among

them, shoes for walking on water), he proceeded to work on his Difference

Engine. This sophisticated mechanical calculator would preoccupy him for

twenty years, during which he managed to convince the Chancellor of the

Exchequer to lend financial support and became the first recipient of a

government grant for computer science. Following a nervous breakdown and

other major crises, in 1833 he gave up on the machine, then conceived an

even more grandiose scheme.

For the rest of his life Babbage would attempt to construct

his Analytical Engine, a mechanical device that would have included most

of the essential features of modern digital computers: a central processing

unit, software instructions, memory storage and printed output. He spent

years making detailed drawings, inventing machine tools and actually trying

to build his machine, only to concede finally that his concept was way beyond

the technology of the time.

Most people thought he was crazy to spend so much time

and money on an obviously absurd idea (even the British government kept

withdrawing its support), but in fact the Analytical Engine was just one

of Babbage's myriad concerns. Fortunate enough to have been born into wealth,

he enjoyed the luxury of time to follow his many other interests: consulting

for railroad pioneers, devising a mail delivery system based on wires strung

between towers to carry containers of mail, and inventing a lighthouse flashing

system and a submarine. He also had the distinction of being able to pick

any lock.

Yet the government positions and honors Babbage felt

were his due constantly eluded him. These disappointments and slights led

to deep bitterness, revealed in his oft-quoted observation that he had never

had a happy day in his life. A friend of his wrote: "He spoke as if he hated

mankind in general, Englishmen in particular and the English Government

most of all."

Part of Babbage's proclaimed unhappiness undoubtedly

came from his notorious feud with London's organ grinders, then estimated

to number a thousand, whom he tried to silence with a court action. In retaliation,

the organ grinders came from miles around to play their noisy instruments

in front of his home, at times joined by street punks who tossed dead cats,

blew bugles at him and smashed his windows. Jeering children followed him

around, as did bands of hecklers, sometimes as many as a hundred at a time,

shouting threats to overturn the cab he was riding in or to burn down his

house.

Still, idiosyncrasies and all, Babbage was considered

a fascinating companion. He was a witty and sought-after dinner guest and

often hosted parties for two or three hundred people. Among his friends

were the most notable scientific, political and literary figures of the

day, including Charles Dickens.

One of his most intriguing friendships was with Lady

Augusta Ada Lovelace, the only legitimate daughter of Lord Byron. A beautiful and charming

woman, Lady Lovelace was the same age Babbage's deceased daughter would

have been. The widower Babbage and Lady Lovelace, who had been brought up

without her father, enjoyed a close, mutually rewarding relationship until

her death at age thirty-six. Babbage himself died at seventy-nine, with

only one mourner besides the family group at the funeral. At the end he

was considered to have been a failure with an unworkable idea. Yet on the

moon, which computers enabled man to reach, is a crater named for Charles

Babbage. And the Royal College of Surgeons in England has preserved the brain

of this man who lived before his time.

ADA LOVELACE: THE FIRST COMPUTER PROGRAMMER

Ada! Wilt thou by affection's law,

My mind from the darken'd past withdraw?

Teach me to live in the future day ...

-Lord Byron

Today's computer programmers are clever indeed,

but few could hold a light-emitting diode to Augusta Ada Lovelace in terms

of creativity. She saw the art as well as the science in programming, and

she wrote eloquently about it. She was also an opium addict and a compulsive

gambler.

As the only legitimate offspring of Lord Byron, Ada

would have been a footnote to history even if she hadn't been a mathematical

prodigy. Though Byron later wrote poignantly about his daughter, he left

the family when she was a month old and she never saw him again. Biographers

tend to be rather hard on Ada's mother, blaming her for many of her daughter's

ills. A vain and overbearing Victorian figure, she considered a healthy

daily dose of laudanum-laced "tonic" the perfect cure for Ada's nonconforming

behavior.

Ada was well tutored by those around her (British logician

Augustus De Morgan was a close family friend), but she hungered for more

knowledge than they could provide. She was actively seeking a mentor when

Charles Babbage came to the house to demonstrate his Difference Engine for

her mother's friends. Then and there, Ada resolved to help him realize his

grandest dream: the long planned but never constructed Analytical Engine.

Present on that historic evening was Mrs. De Morgan, who wrote in her memoirs:

"While the rest of the party gazed at this beautiful instrument with the same sort of expression and feeling that some savages are said to have shown on first seeing a looking glass or hearing a gun, Miss Byron, young as she was, understood its workings and saw the great beauty of the invention."

If Babbage was the force behind the hardware of the first protocomputer, Ada was the creator of its software. As a mathematician, she saw that the Analytical Engine's possibilities extended far beyond its original purpose of calculating mathematical and navigational tables. When Babbage returned from a speaking tour on the Continent, Ada translated the extensive notes taken by one Count Menabrea in Italy and composed an addendum nearly three times as long as the original text. Her published notes are particularly poignant to programmers, who can see how truly ahead of her time she was. Prof. B. H. Newman wrote in the Mathematical Gazette that her observations "show her to have fully understood the principles of a programmed computer a century before its time." Among her programming innovations for a machine that would never be realized in her lifetime were the subroutine (a set of reusable instructions), looping (running a useful set of instructions over and over) and the conditional jump (branching to specified instructions if a particular condition is satisfied).
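For readers who have never programmed, the three constructs credited to Ada (the subroutine, the loop and the conditional jump) can be seen side by side in a short modern example. The Python below is purely illustrative; the function names and the sum-of-squares task are assumptions chosen for brevity and have nothing to do with the notation Ada herself used.

```python
def square(n: int) -> int:
    """Subroutine: a reusable set of instructions."""
    return n * n


def sum_of_squares_up_to(limit: int) -> int:
    """Add up 1*1 + 2*2 + ... + limit*limit."""
    total = 0
    n = 1
    while True:             # looping: run the same instructions over and over
        if n > limit:       # conditional jump: branch out once the condition holds
            break
        total += square(n)  # call the subroutine instead of repeating its steps
        n += 1
    return total


print(sum_of_squares_up_to(4))  # 1 + 4 + 9 + 16 = 30
```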

Ada also noted that machines might someday be built

with capabilities far beyond the technology of her day and speculated about

whether such machines could ever achieve intelligence. Her argument against

artificial intelligence in "Observations on the Analytical Engine" was immortalized

just over a hundred years later by Alan M. Turing, who referred to this line

of thinking as "Lady Lovelace's Objection." It is an objection often heard

in debates about machine intelligence. "The Analytical Engine," Ada wrote,

"has no pretensions whatever to originate anything. It can do whatever we

know how to order it to perform."

It is not known how or when Ada became involved in her

clandestine and disastrous gambling ventures. Unquestionably, she was an

accomplice in more than one of Babbage's schemes to raise money for his Analytical

Engine. Together they considered the profitability of building a tic-tac-toe

machine and even a mechanical chess player. And it was their attempt to develop

a mathematically infallible system for betting on the ponies that brought

Ada to the sorry pass of twice pawning her husband's family jewels to pay

off blackmailing bookies.

Ada died when she was only thirty-six years old, but

she is present today in her father's poetry and as the namesake of the government's

newest computer language. In the late 1970s the Pentagon selected for its

own a specially constructed "superlanguage," known only as the "green language"

(three competitors were assigned the names "red," "blue" and "yellow") until

it was officially named after Ada Lovelace. Ada is now a registered trademark

of the United States Department of Defense.

HOWARD RHEINGOLD

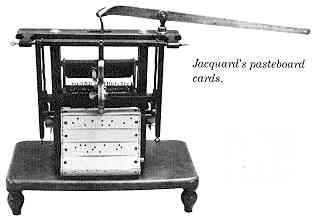

IN THE CARDS

The use of punch cards in computers was devised by Charles Babbage, who adapted the automated textile loom invented in 1805 by Frenchman Joseph Marie Jacquard. The Jacquard pasteboard cards had holes punched out of them to allow only certain threads to be grasped by the rods that did the weaving. In Babbage's computer, moving rods would decipher two sets of cards: one to designate the operations to be performed, the other for the variables on which they were to operate. Punch cards would remain in widespread use until the development of fast, reliable magnetic mass storage media in the 1960s.
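The idea of two card decks, one naming operations and the other naming the variables they act on, can be sketched in Python. The card encoding here (operation names such as "MUL", column labels such as "V0") is invented for illustration and should not be taken as Babbage's actual card format.

```python
import operator

# Hypothetical operation names mapped to ordinary arithmetic.
OPERATIONS = {"ADD": operator.add, "SUB": operator.sub, "MUL": operator.mul}


def run_cards(operation_cards, variable_cards, store):
    """Read one operation card and one variable card per step.

    Each variable card names two input columns and one output column
    in `store`, a dict standing in for the machine's number columns.
    """
    for op_name, (left, right, result) in zip(operation_cards, variable_cards):
        store[result] = OPERATIONS[op_name](store[left], store[right])
    return store


# Compute (6 * 7) + 2 using two steps.
store = {"V0": 6, "V1": 7, "V2": 2, "V3": 0, "V4": 0}
operation_cards = ["MUL", "ADD"]
variable_cards = [("V0", "V1", "V3"), ("V3", "V2", "V4")]

print(run_cards(operation_cards, variable_cards, store)["V4"])  # 44
```

Keeping the two decks separate means the same sequence of operations can be rerun on different numbers simply by swapping the variable cards.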

ALAN M. TURING (1912-1954)

One of the great abstract thinkers of this century, Alan M. Turing was

another eccentric who started out as an extremely gifted student. As early

as age nine, he reportedly startled his mother by asking questions like

"What makes oxygen fit so tightly with hydrogen to produce water?"

By the time he reached Cambridge University, Turing

was already known for his unorthodox ways. When setting his watch, he did

not simply ask someone the correct time but instead observed a specific

star from a specific locale and mentally calculated the hour. He jogged long

distances in a time when this was considered odd, and he thought nothing

of riding his bicycle twelve miles through a rainstorm at night to keep

an appointment. (When his bicycle chain was skipping, instead of fixing

the chain he correctly calculated the exact moment of each skip and pedaled

accordingly.)

While some might describe his methods as doing things

the hard way, for Turing they were merely a game. The first proof of his

brilliance came in 1936, when at the age of twenty-four he published his

paper "On Computable Numbers, with an application to the Entscheidungsproblem."

In this major contribution to computing theory, Turing presented a landmark

theorem in mathematical logic in terms of an idealized computing machine.

He posited that a "Universal Turing Machine" could embody any logical procedure

as long as it was given appropriate instructions. A decade before the first

practical computer, he described its essential characteristics.
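The flavor of Turing's idealized machine can be conveyed with a very small simulator. What follows is a minimal sketch under stated assumptions, not Turing's own formulation: the rule-table format, the state names ("start", "carry", "halt") and the blank symbol "_" are all choices made for this example. The point is that one fixed routine can carry out any procedure it is handed as a table of instructions.

```python
def run_turing_machine(rules, tape, state="start", head=0, blank="_",
                       max_steps=10_000):
    """Run a one-tape machine until it reaches the 'halt' state.

    `rules` maps (state, symbol) to (symbol_to_write, move, next_state),
    where move is "L" or "R". Returns the tape contents without
    surrounding blanks.
    """
    cells = dict(enumerate(tape))  # sparse tape: position -> symbol
    for _ in range(max_steps):
        if state == "halt":
            break
        symbol = cells.get(head, blank)
        write, move, state = rules[(state, symbol)]
        cells[head] = write
        head += 1 if move == "R" else -1
    lo, hi = min(cells), max(cells)
    return "".join(cells.get(i, blank) for i in range(lo, hi + 1)).strip(blank)


# Instruction table for adding 1 to a binary number.
INCREMENT_RULES = {
    ("start", "0"): ("0", "R", "start"),  # scan right to the end of the number
    ("start", "1"): ("1", "R", "start"),
    ("start", "_"): ("_", "L", "carry"),  # passed the last digit; turn around
    ("carry", "1"): ("0", "L", "carry"),  # 1 + 1 = 0, carry continues left
    ("carry", "0"): ("1", "L", "halt"),   # absorb the carry and stop
    ("carry", "_"): ("1", "L", "halt"),   # carry past the leftmost digit
}

print(run_turing_machine(INCREMENT_RULES, "1011"))  # 1100
print(run_turing_machine(INCREMENT_RULES, "111"))   # 1000
```

Handing the same simulator a different table makes it perform a different procedure, which is the sense in which such a machine is called universal.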

Turing's findings were regarded by some as proof that

human intelligence is superior to machine intelligence, but this was not

his idea at all. In his essay "Can a Machine Think?" first published in 1950,

he wrote: "We too often give wrong answers to questions ourselves to be

justified in being very pleased at such evidence of fallibility on the part

of the machines." His essay also contained the notion that "there might be

men cleverer than any given machine, but, then again, there might be other

machines cleverer again, and so on."

During World War II Turing was one of a team of British

scientists sequestered at the lovely Bletchley Park estate and ordered to

develop machinery that could decipher codes from Germany's Enigma encoding

machines. The results of these efforts, often credited with a decisive role

in winning the war, were the electromechanical machines nicknamed Heath

Robinson (after the 1930s cartoonist of the Rube Goldberg school), Peter

Robinson, the Robinson and Cleaver (both named after London stores) and

the Super Robinson. The successor to these machines, the Colossus 1, is

recognized as one of the first electronic computers. Turing went on to help

design the Automatic Computing Engine (ACE) Pilot computer and later worked

on the Manchester Automatic Digital Machine (MADM), one of the earliest stored-program

computers. In 1951 and 1952 he took part in radio debates on the computer's

ability to think.

Arrested in 1952 for "gross indecency," Turing was subjected

to hormone injections that rendered him impotent. His homosexuality was

considered a security risk at the height of the cold war. His last extraordinary

act was to kill himself, in 1954, at the age of forty-one. Whether on purpose,

as the coroner ruled, or accidentally, as many believed, he died of poisoning

from potassium cyanide.

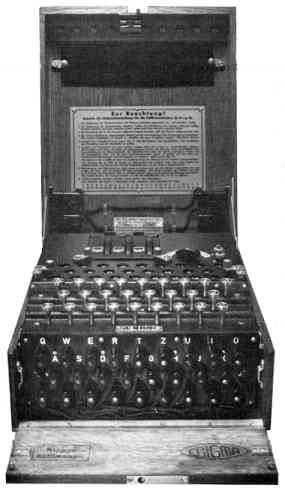

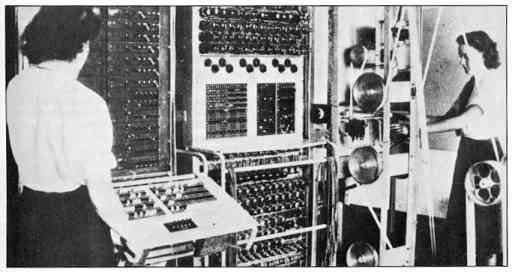

CRACKING THE NAZI CODE

The Enigma code machine (left) gave the Nazis a decided advantage in the early days of World War II by keeping German front-line communications undecipherable to the Allies. Though one of the first of these machines was captured and its messages decoded by the Poles, subsequent improvements in Enigma's design, incorporating frequent changes in the keys by which characters were enciphered, rendered its output a deadly mystery.

The British government hoped to break the codes through high-speed automated transposition of ciphered characters to find their underlying patterns. Eventually these efforts led to the construction of the Colossus 1 (pictured below). The machine was cloaked in secrecy; after helping to crack the code and revealing invaluable information that hastened the Allies' victory, it was dismantled for reasons of security. The only known surviving part is the paper tape feeder wheel (shown here), used for many years as a paperweight before being donated to the Computer Museum in Boston.

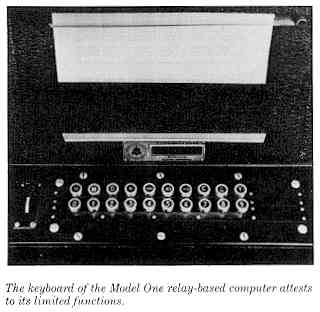

MODEL ONE: THE FIRST MODERN BINARY COMPUTER

Non-eccentrics have also made their mark on the history of computers. In February 1983, forty-five years after he developed the world's first binary computer, Dr. George Stibitz was finally recognized by his peers and invited to join the National Inventors Hall of Fame. At the time of his induction, he was hard at work on four different major projects at Dartmouth Medical School in Hanover, New Hampshire.

Dr. Stibitz constructed his machine to solve a manpower problem at Bell Laboratories. In 1937 the phone company had begun to rely on complex numbers in the equations that computed characteristics of filters and transmission lines. Thirty people worked full time at the impossible task of performing these computations on large, bulky desk calculators, the only tools available that could work with i, the square root of minus one.

Looking for a more efficient way to get the job done, Stibitz experimented at home with the concept of building a calculator that would automatically solve complex arithmetic problems with binary computations. The most convenient parts available were the old-fashioned electromechanical telephone relays: because they could be switched either on or off to represent 0s and 1s, the relays were ideal for performing binary calculations. Satisfied that his concept would work, Stibitz convinced his boss to build a full-scale working model.
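How on/off relays can do arithmetic is easy to show in miniature. The Python below is a sketch of a generic binary adder in which each True or False value plays the part of a closed or open relay; it is an illustration of the principle only, and the function names and the four-bit width are assumptions, not a description of the circuit Stibitz actually built.

```python
def full_adder(a: bool, b: bool, carry_in: bool) -> tuple[bool, bool]:
    """Add three one-bit values; return (sum_bit, carry_out)."""
    sum_bit = (a != b) != carry_in                    # exclusive-or of all three
    carry_out = (a and b) or (carry_in and (a != b))  # at least two inputs on
    return sum_bit, carry_out


def add_binary(x_bits, y_bits):
    """Add two equal-length lists of relay states, least significant bit first."""
    result = []
    carry = False
    for a, b in zip(x_bits, y_bits):
        s, carry = full_adder(a, b, carry)
        result.append(s)
    result.append(carry)  # final carry becomes the top bit
    return result


def to_bits(n: int, width: int):
    """Convert a non-negative integer to `width` relay states, low bit first."""
    return [bool((n >> i) & 1) for i in range(width)]


def from_bits(bits) -> int:
    """Convert relay states (low bit first) back to an integer."""
    return sum(1 << i for i, bit in enumerate(bits) if bit)


print(from_bits(add_binary(to_bits(5, 4), to_bits(6, 4))))  # 11
```

Every step uses nothing but on/off decisions, which is exactly what a telephone relay provides.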

By 1939 the Model One went into operation, chopping

off two-thirds of the time needed to solve the equations. The machine consisted

of two large banks of telephone relays, each bank measuring eight feet in

height, five feet across and a foot thick. Each relay was five inches long

and one and a half inches wide. The left bank handled the complex values,

while the right bank juggled the integer numbers. Then the two banks were

integrated for the final solution. The fact that it held only 32 bytes of

memory (1/32K), and that it cost a whopping $20,000 to build, took nothing

away from its success.

Bell went on to build bigger and better versions of

the relay-based computer, but this technology was dated almost as soon as

it was assembled. Relay-based computers were more reliable than vacuum-tube-blowing

behemoths like ENIAC, but the relays were outclassed in calculating speed

by about 500 to 1.

All of today's computers can trace their roots back to

Stibitz' talent and imagination. His binary computer, U.S. Patent No. 2,668,661

issued in 1954, now takes its place next to other great American inventions

like the Model-T, the cotton gin, the electric light and the Wright brothers'

plane.

ROE R. ADAMS III

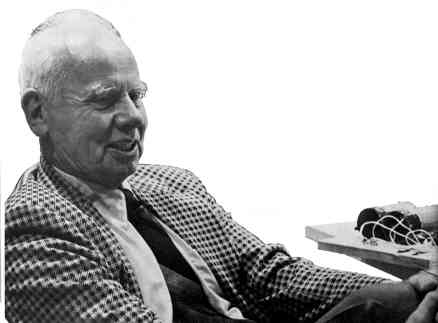

George Stibitz with his first binary adder.
